Together AI

Fast inference API for open-source AI models — run Llama, Qwen, Mistral, DeepSeek, and others at production speed without infrastructure overhead

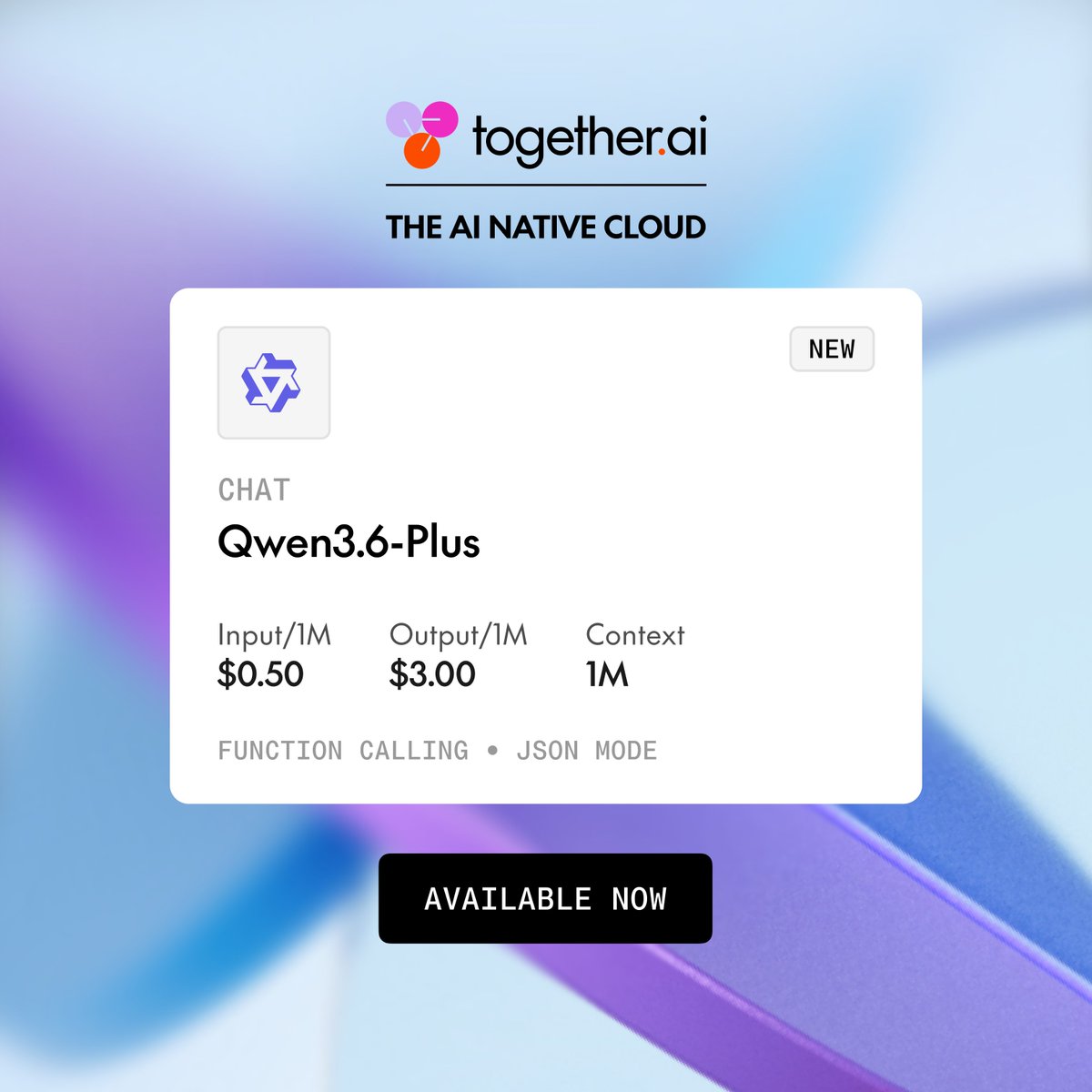

Pay-as-you-go; pricing varies by model (from $0.10/1M tokens for small models)

Overview

Together AI is a high-performance inference platform for open-source AI models. It specializes in fast, affordable inference for the leading open-weight LLMs, often at significantly lower cost and latency than comparable proprietary models from OpenAI.

Key Features

- Inference API for 200+ open-source models including Llama, Mistral, DeepSeek, and Qwen

- Together Dedicated: reserved capacity for consistent latency SLAs

- Fine-tuning pipeline for custom model training on your data

- Mixture of Agents: combine multiple models for better outputs

- OpenAI-compatible API — drop-in replacement for most applications

- Enterprise-grade reliability and compliance
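
Because the API is described as OpenAI-compatible, a request can be sketched in the usual chat-completions shape. The example below is a minimal, standard-library-only illustration, not an official client. The base URL `https://api.together.xyz/v1`, the model ID, and the `TOGETHER_API_KEY` environment variable are assumptions that do not appear in this listing; check them against Together AI's documentation before relying on them.

```python
import json
import os
import urllib.request

# Assumed values (not stated in this listing); verify against the provider's docs.
BASE_URL = "https://api.together.xyz/v1"
MODEL = "meta-llama/Meta-Llama-3.1-8B-Instruct-Turbo"


def build_chat_request(prompt, api_key, model=MODEL, base_url=BASE_URL):
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Only hits the network when a key is present in the environment.
    key = os.environ.get("TOGETHER_API_KEY")
    if key:
        print(send(build_chat_request("Say hello in one sentence.", key)))
```

In an application that already uses an OpenAI client library, the equivalent migration is typically limited to changing the base URL, API key, and model name, provided the application uses only the endpoints the compatible API supports.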

Pricing: Pay-as-you-go; Llama 3.1 8B from $0.10/1M tokens; larger models priced higher.
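
To make the per-token rate concrete, here is a small cost estimate based on the $0.10 per 1M tokens entry price quoted above. The 5-million-token workload is a hypothetical figure, and the sketch assumes input and output tokens are billed at the same rate, which may not hold for every model.

```python
from decimal import Decimal

# Entry price quoted in the listing (USD per 1M tokens, Llama 3.1 8B).
PRICE_PER_MILLION_USD = Decimal("0.10")


def estimate_cost(total_tokens, price_per_million=PRICE_PER_MILLION_USD):
    """Return the estimated USD cost for a given number of tokens."""
    return Decimal(total_tokens) * price_per_million / Decimal(1_000_000)


# Hypothetical workload: 5 million tokens in a month.
monthly_cost = estimate_cost(5_000_000)
print(f"Estimated cost: ${monthly_cost:.2f}")
```

At this rate, 5 million tokens come to $0.50. Larger models are priced higher, so pass their rate as `price_per_million`.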

Pros

- Significantly cheaper than OpenAI when an open-source model of comparable capability fits the task

- OpenAI-compatible API — easy migration

- Fast inference with competitive latency

- Fine-tuning and custom model training built in

Cons

- Open-source models still trail GPT-4o and Claude on complex reasoning

- Fine-tuning pipeline requires ML knowledge

- Fewer safety guardrails than closed model providers

Similar Tools

Groq

AI inference hardware and API provider delivering ultra-fast LLM responses — built on custom LPU chips for real-time AI applications

Replicate

Cloud platform for running and deploying open-source AI models with a simple API — access Flux, Stable Diffusion, Llama, and thousands more

claude-mem

Persistent memory plugin for Claude Code that captures and compresses session context

Falcon

Open-source foundation models from the Technology Innovation Institute in Abu Dhabi — among the first truly open, commercially licensed large language models